I was invited to speak at this conference about my work on what I call “semantic listening”: systems that listen for contextual signals rather than analyzing raw physical signals. In other words, sensors for meaning.

Here is the abstract:

We are already living with a new class of devices, ones that don’t just overhear our conversations but understand them. Think Google Now, the Moto X (with its always-listening speech-recognition feature), the Amazon Echo, and more. Before long, objects that we think of as “ordinary” will have these same abilities. But do you want your every utterance recorded forever? How will you know when you are being recorded?

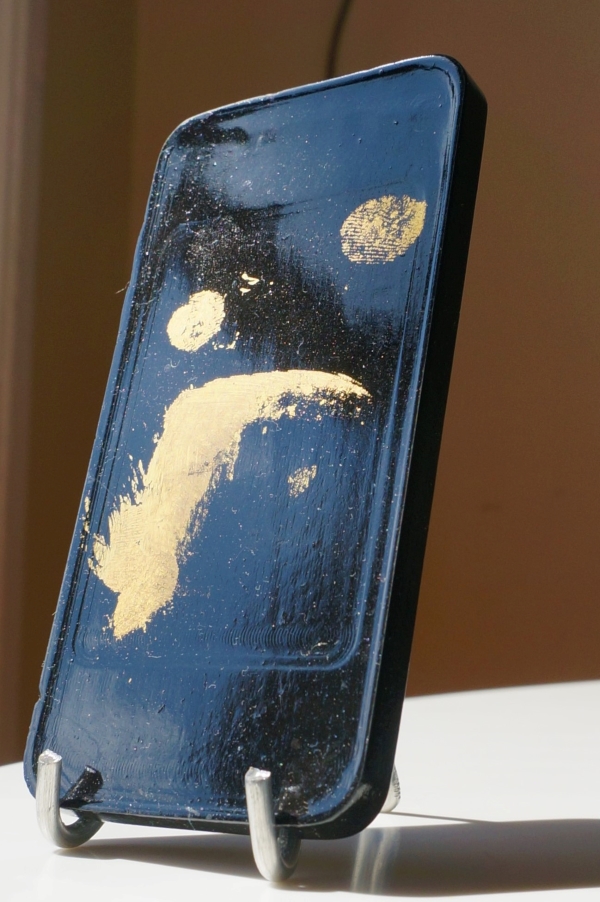

The New York Times R&D Lab made the Listening Table to explore ways that ubiquitous speech capture can actually work for the people who use it (and nobody else). In this talk, I’ll show the design process behind the creation of the Table and connect it to the larger “Semantic Listening” research effort we’re pursuing at the Lab.

After walking through the system architecture and fabrication process, I’ll go into particular detail regarding the balance we struck between ubiquitous capture and user privacy, as these factors will be critical in determining the success or failure of future “enchanted objects.” I’ll end with details about upcoming projects that further explore the potential opportunities and challenges of semantic listening.

Key topics:

Usability – design considerations when building verbose-capture processes: how to show omissions, abstractions, and inferences.

Privacy – how to design the system, both internally and externally, to serve our values: operations should happen transparently, people in the room should have maximum agency, and the interface should afford a sense of virtuosity.

Authenticity – making an authentic speculative object means treading a fine line between building working systems and “fudging” future technologies or interactions, and knowing how to navigate that line.
